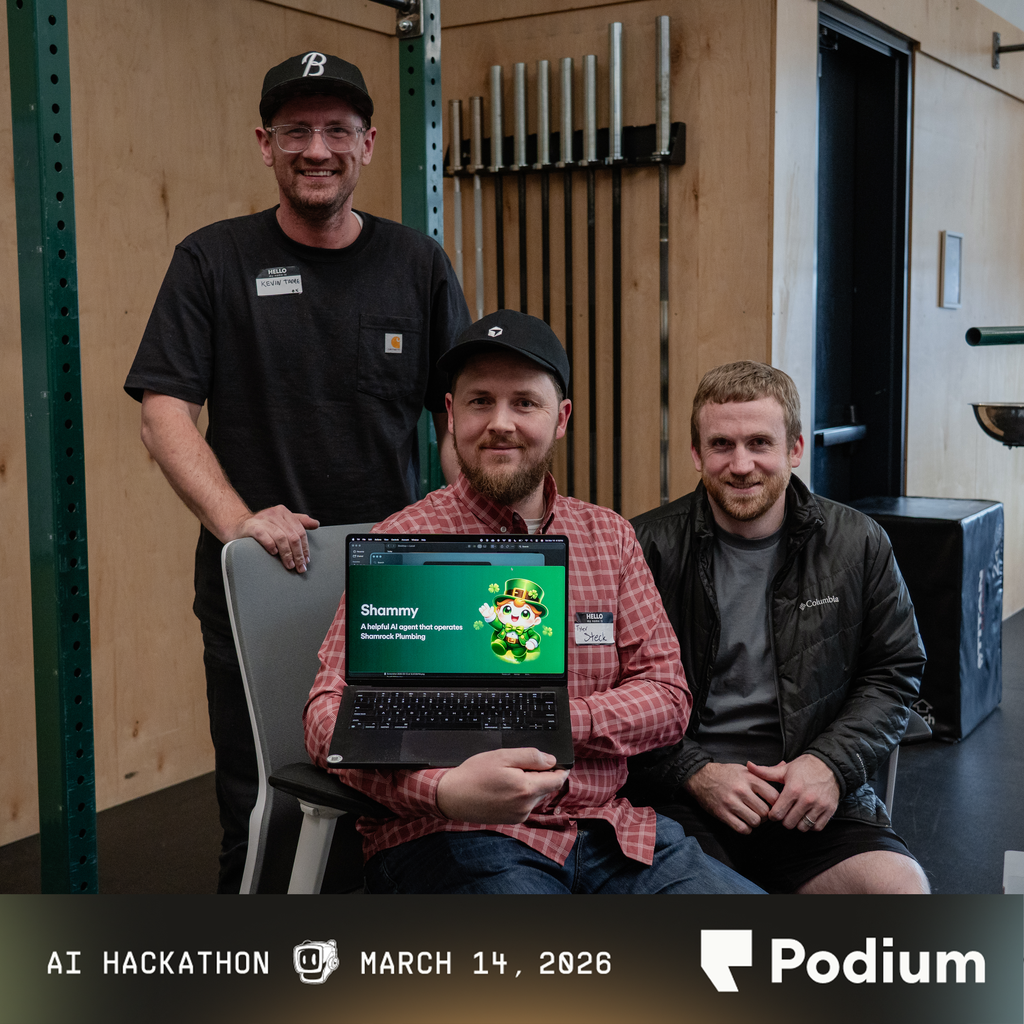

AI Hackathon at Podium

Yesterday, I attended an invite-only hackathon at Podium, and I had a blast. But as someone who spends a lot of time thinking about how AI actually gets implemented, I walked away with a few reflections I can't shake.

The challenge was to build something autonomous. With only a few hours on the clock, that's a tall order. True autonomy requires serious intent engineering and context building, and that kind of work doesn't happen quickly. Most of the demos didn't quite get there. Many were well-built chatbots following strict flows, and for flows that rigid, a deterministic rules-based system could probably handle the task more reliably. One demo stood out, though: a system that monitored conditions and decided on its own whether to act. It wasn't flashy, and it didn't demo particularly well, but to me it was the most honest interpretation of autonomy. Truly autonomous systems are almost anti-demo by nature. They don't shine when someone is clicking through slides. They shine in the quiet moments when no one is watching.

That gap between demos and reality becomes even clearer when you start thinking about scale. At Vivint, we deal with enormous amounts of continuously generated data: IoT devices, cameras, sensors, and everything that comes with them. So when I see demos built around retrieval-augmented generation (RAG) and vector search, I can't help but think about what happens when those systems move into production. As your dataset grows, the vector space starts to crowd. More and more items begin to look semantically similar, and the distance between the right answer and the almost-right answers shrinks. At that point retrieval becomes fragile: a small change in the query's wording can reorder the top results, and it starts to feel like a guessing game. Many of these demos described the AI analyzing all of the data and then taking action. The hard part is that no context window is big enough to hold data at that volume, so at scale the only viable approach is RAG, and RAG is exactly what falls apart as the space crowds.
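To make the crowding effect concrete, here is a toy simulation. It is a sketch under loud assumptions, not a model of any real embedding system: embeddings are random unit vectors in 64 dimensions, the "right answer" is a single planted document with a fixed cosine similarity of 0.45 to the query, and every other document is a random distractor. The function names (`crowding_margins`, `planted_target`) and all parameter values are hypothetical, chosen only for illustration. The margin is the right answer's score minus the best distractor's score.

```python
import math
import random


def random_unit(rng: random.Random, dim: int) -> list[float]:
    """Sample a direction uniformly on the unit sphere (normalized Gaussian)."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a: list[float], b: list[float]) -> float:
    """Dot product; equals cosine similarity for unit vectors."""
    return sum(x * y for x, y in zip(a, b))


def planted_target(rng: random.Random, query: list[float], cos_sim: float) -> list[float]:
    """Hypothetical 'right answer': a unit vector at exactly `cos_sim` to the query."""
    r = random_unit(rng, len(query))
    proj = dot(r, query)
    # Remove the query component, leaving a unit direction orthogonal to the query.
    ortho = [x - proj * q for x, q in zip(r, query)]
    norm = math.sqrt(sum(x * x for x in ortho))
    ortho = [x / norm for x in ortho]
    # Mix the query and orthogonal directions so the cosine is exactly cos_sim.
    s = math.sqrt(1.0 - cos_sim ** 2)
    return [cos_sim * q + s * o for q, o in zip(query, ortho)]


def crowding_margins(sizes: list[int], dim: int = 64, target_sim: float = 0.45,
                     trials: int = 3, seed: int = 0) -> dict[int, float]:
    """Average (right-answer score - best distractor score) for each corpus size.

    Each trial scores one pool of random distractors and evaluates prefixes of
    it, so every larger corpus contains the smaller ones.
    """
    rng = random.Random(seed)
    sizes = sorted(sizes)
    totals = [0.0] * len(sizes)
    for _ in range(trials):
        query = random_unit(rng, dim)
        target_score = dot(planted_target(rng, query, target_sim), query)
        scores = [dot(random_unit(rng, dim), query) for _ in range(sizes[-1])]
        for i, n in enumerate(sizes):
            totals[i] += target_score - max(scores[:n])
    return {n: total / trials for n, total in zip(sizes, totals)}


if __name__ == "__main__":
    for n, margin in crowding_margins([10, 100, 1_000, 5_000]).items():
        print(f"N={n:>5}  margin={margin:+.3f}")
```

Because each larger corpus contains the smaller one, the best distractor can only get closer to the query as N grows, so the margin never increases; with these parameters it can eventually turn negative, which is the point where a distractor outranks the right answer. Real embeddings are not spread uniformly like these random vectors, so treat the numbers as illustrative only.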

Then you run headfirst into the real engineering problems: cost, latency, and the constant tradeoff between precision and recall.
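The precision/recall side of that tradeoff shows up as soon as you choose how many chunks to retrieve. The sketch below computes precision@k and recall@k for a single ranked result; the document IDs and relevance labels are made up for illustration, and `precision_recall_at_k` is a hypothetical helper, not part of any particular library.

```python
def precision_recall_at_k(ranked: list[str], relevant: set[str], k: int) -> tuple[float, float]:
    """Precision@k and recall@k for one ranked retrieval result."""
    # Count how many of the top-k retrieved documents are actually relevant.
    hits = sum(1 for doc in ranked[:k] if doc in relevant)
    precision = hits / k
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


if __name__ == "__main__":
    # Hypothetical ranking: document IDs and relevance labels are made up.
    ranked = ["d3", "d7", "d1", "d9", "d4", "d2"]
    relevant = {"d3", "d1", "d2"}
    for k in range(1, len(ranked) + 1):
        p, r = precision_recall_at_k(ranked, relevant, k)
        print(f"k={k}  precision={p:.2f}  recall={r:.2f}")
```

In this made-up ranking, recall only reaches 1.0 at k=6, where half of the retrieved documents are irrelevant. Raising k can never lower recall, but every extra chunk passed to the model adds tokens to the prompt, which is where cost and latency come back in.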

Maybe I'm just coming in with a different perspective, having spent too long staring at AI product development and the limitations of the infrastructure around it. The builders at the hackathon were enthusiastic and creative. That energy matters. But I left Podium more convinced than ever that the hardest problems in AI aren't the flashy ones. They're the infrastructure problems. They're the problems of coordinating memory and context across massive systems with billions or even trillions of parameters. They're the problems of building the layers that make those models reliable, efficient, and actually useful at scale.

I think the real breakthroughs in AI won't look like slick demos. They'll look like better infrastructure.